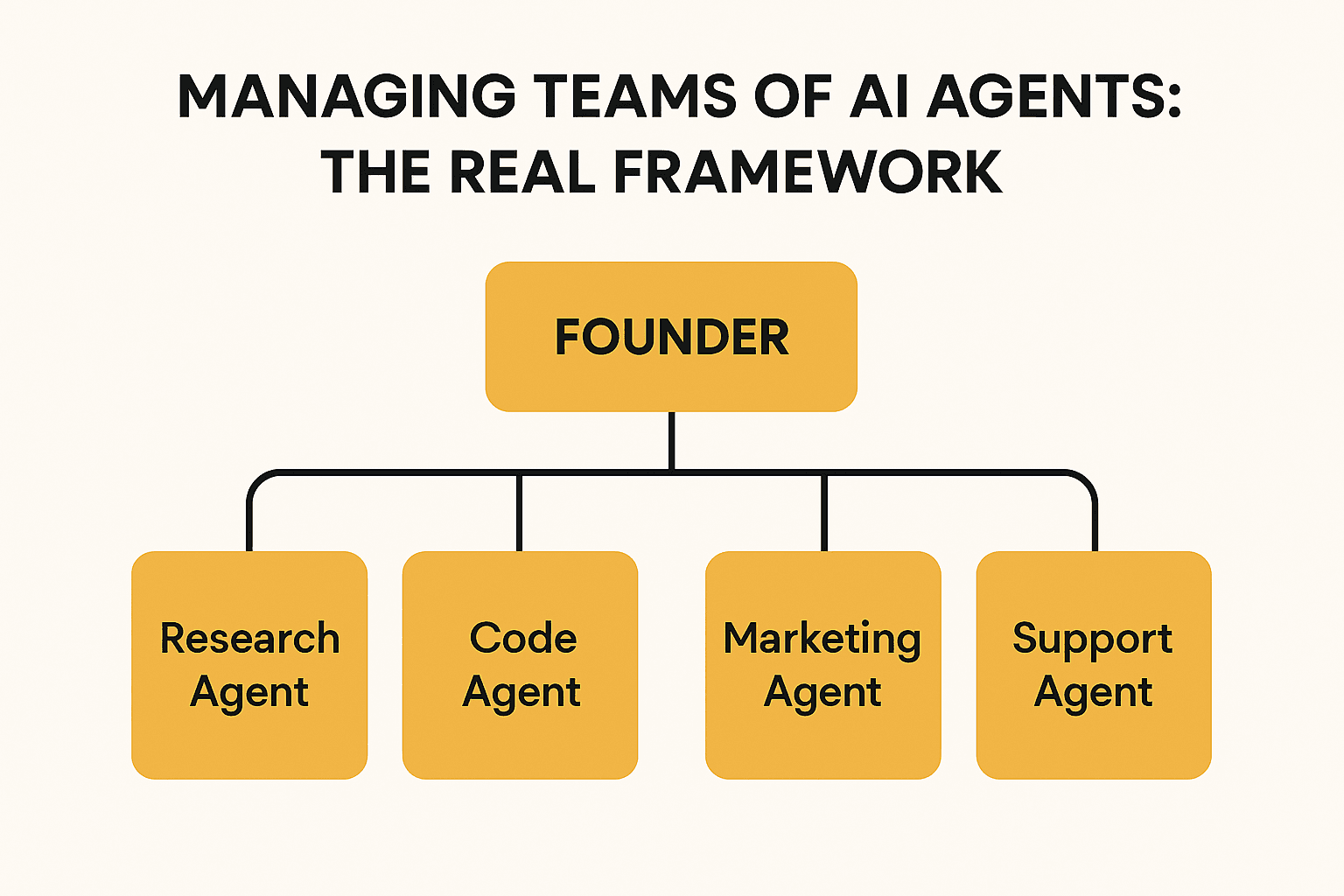

How Solo Founders Are Managing Teams of AI Agents (The Real Framework)

TL;DR: Solo founders are running companies with AI agent teams doing marketing, research, code review, and customer support. The ones doing it well treat agents exactly like employees: clear role definitions, specific instructions, performance monitoring, and a willingness to fire agents that aren't delivering. Here's the actual framework - roles, prompts, review cycles, and when to scale up or cut.

I spent three hours going through every public account of a solo founder running an AI agent team after a post by @KSimback went viral with 170K views and 1.7K bookmarks. The post described managing AI agents the way you manage a team - with hiring criteria, performance reviews, and firing decisions. The engagement made sense: this is the thing every vibe coder is trying to figure out, and almost nobody has written about it at the level of actual practice.

What I found: the founders doing this well share a specific pattern. They're not running 40 generic AI agents in a tangled loop. They're running 3 to 8 specialized agents with clear boundaries, specific context, and regular review cycles. The difference between a working AI team and a chaos machine is almost entirely in the setup - the prompts, the role definitions, and how agents hand off to each other.

This is what that looks like.

Why AI Agent Teams Are the Solo Founder's Leverage Play

A solo founder in 2026 is competing against teams. Not in product quality - AI has compressed that gap. In output volume. In the ability to do marketing research, content creation, code review, customer support, and competitor monitoring simultaneously, without a full-time hire for each.

A defense-tech founder documented building a 15-agent "council" to help run his company - replacing roles that would normally require multiple hires with specialized agents handling specific tasks. A million-dollar solo founder published how she runs her entire marketing operation with 40 agents across posting, SEO monitoring, social listening, and competitor analysis.

The pattern isn't "replace your team with AI." It's "build the team you could never afford." Solo founders at $10K MRR can't hire a marketing team. They can build one.

But there's a trap. More agents without structure create noise, not output. Every founder who has tried to run 10 agents without clear separation of concerns has ended up with contradictory recommendations, agents stepping on each other's work, and a system that requires more babysitting than the task would have taken manually.

The framework below prevents that.

The Three-Layer AI Agent Stack

Before defining specific roles, you need to understand the structure. Effective AI agent teams follow a three-layer model.

Layer 1: The Orchestrator

One agent - or you - that receives final outputs and makes decisions. The orchestrator doesn't do the work. It coordinates, reviews, and decides what to act on. If you're the solo founder, you're often this layer. Some founders delegate orchestration to an agent as well, but this creates a single point of failure you have to monitor closely.

Layer 2: Specialists

Agents with narrow, well-defined jobs. A research agent. A content agent. A customer feedback agent. A code review agent. Each specialist knows its domain deeply and hands off cleanly to the orchestrator or to another specialist as defined in its instructions.

Layer 3: Executors

Agents that perform specific tasks within a specialist's workflow. A content agent might spawn an executor to draft a tweet thread, another to write a meta description, another to check for brand voice consistency. Executors are one-shot agents with a narrow job and a clear deliverable.

Most solo founders start at Layer 2 and never need Layer 3. Start there.
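As a rough illustration of how the three layers relate, the sketch below uses plain Python functions as stand-ins for agents. The function names (`draft_thread`, `write_meta_description`, `content_specialist`, `orchestrate`) are hypothetical, and the bracketed strings stand in for real model output; no model is actually called.

```python
from typing import Callable


# Layer 3: executors are one-shot functions with a narrow job.
# The returned strings are placeholders for real model output.
def draft_thread(topic: str) -> str:
    return f"[thread draft about {topic}]"


def write_meta_description(topic: str) -> str:
    return f"[meta description for {topic}]"


# Layer 2: a specialist owns one domain and may call executors.
def content_specialist(topic: str) -> dict[str, str]:
    executors: dict[str, Callable[[str], str]] = {
        "thread": draft_thread,
        "meta_description": write_meta_description,
    }
    return {name: run(topic) for name, run in executors.items()}


# Layer 1: the orchestrator does no work itself. It only gathers
# specialist outputs so that you (or an agent) can decide what to act on.
def orchestrate(topic: str) -> dict[str, dict[str, str]]:
    return {"content": content_specialist(topic)}


review_queue = orchestrate("onboarding")
```

If you never need Layer 3, the specialist simply returns its own output and the executor dictionary disappears.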

Defining Roles That Actually Work

The biggest mistake in building an AI agent team is vague role definitions. "Marketing agent" is not a role. "Content calendar agent that plans posts for the week based on our ICP research file, brand voice guide, and the three high-performing posts from last month" is a role.

Every agent needs four things in its definition:

- What it does - specific, narrow, not overlapping with another agent

- What context it has - which files, documents, or data it has access to

- What it produces - a specific output format you can act on

- Who it hands off to - the next agent or you
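One possible way to make those four things hard to skip is to write each agent definition as a small data structure and check it for gaps. This is a minimal sketch, not a required format: the `AgentRole` class, its field names, and the file names are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRole:
    """One agent definition holding the four required parts."""
    name: str
    does: str                 # what it does: specific and narrow
    context: tuple[str, ...]  # files, documents, or data it can access
    produces: str             # output format you can act on
    hands_off_to: str         # the next agent, or "you"

    def missing_fields(self) -> list[str]:
        """Return the names of any of the four parts left empty."""
        checks = {
            "does": self.does.strip(),
            "context": self.context,
            "produces": self.produces.strip(),
            "hands_off_to": self.hands_off_to.strip(),
        }
        return [name for name, value in checks.items() if not value]


# Hypothetical file names, for illustration only.
research = AgentRole(
    name="Research Agent",
    does="Summarize ICP community pain points from the last 7 days",
    context=("icp_definition.md", "product_roadmap.md", "community_list.md"),
    produces="Weekly Markdown report",
    hands_off_to="you",
)

# "Marketing agent" with nothing else filled in is not a role.
vague = AgentRole(name="Marketing agent", does="", context=(),
                  produces="", hands_off_to="")
```

Calling `vague.missing_fields()` lists every part that still needs an answer before the agent is worth running.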

Here's what a working agent team looks like for a typical solo SaaS founder:

Research Agent

- Role: Monitor ICP communities (Reddit, X, Discord servers) for problems, questions, and product feedback relevant to [your product category]. Produce a weekly summary: top 5 pain points mentioned, notable user language, and one emerging problem not on the product roadmap.

- Context: ICP definition file, product roadmap, community list.

- Output: Markdown report, weekly.

- Hands off to: You, for prioritization decisions.

Content Agent

- Role: Write 5 X posts per week based on the research agent's weekly summary and our brand voice guide. Each post should connect directly to one pain point from the research report.

- Context: Brand voice guide, last month's top-performing posts, research agent's weekly report.

- Output: 5 drafted posts in plain text.

- Hands off to: You, for review and scheduling.

Customer Feedback Agent

- Role: Every Monday, pull all support tickets from the last 7 days. Categorize by: bug report, feature request, onboarding confusion, praise. Identify the top 3 most common complaints and the top 3 most common requests.

- Context: Support ticket system access, product changelog.

- Output: Categorized report with frequencies and direct quotes.

- Hands off to: You, for sprint prioritization.

Code Review Agent

- Role: Review every PR before it's merged. Check for: security issues, obvious performance problems, deviations from the existing code style in the repository. Do not approve or reject - flag issues with specific line numbers and reasoning.

- Context: Repository, code style guide.

- Output: Inline comments + summary note.

- Hands off to: You, for final merge decision.

That's four agents. That's a team. Go much beyond that and you're managing the management.
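One way to keep handoffs honest as the team changes is to check that every agent hands off to something that actually exists. The sketch below assumes the four roles above are stored in a plain dictionary; the keys and the `broken_handoffs` helper are hypothetical.

```python
# Each agent maps to the target it hands off to: another agent's key, or "you".
TEAM = {
    "research": {"hands_off_to": "you"},
    "content": {"hands_off_to": "you"},
    "customer_feedback": {"hands_off_to": "you"},
    "code_review": {"hands_off_to": "you"},
}


def broken_handoffs(team: dict[str, dict[str, str]]) -> list[str]:
    """Return agents whose handoff target is neither 'you' nor a defined agent."""
    valid_targets = set(team) | {"you"}
    return sorted(
        name for name, role in team.items()
        if role["hands_off_to"] not in valid_targets
    )
```

An empty result means every output lands somewhere; any name in the list is an agent producing work that nobody is set up to receive.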

How to Write Prompts That Don't Fail

Agent teams break at the prompts. The instruction quality determines the output quality more than the model you're using.

Three rules for agent prompts that work:

Rule 1: Define what the agent should NOT do as clearly as what it should do. The Content Agent should not make product claims that aren't in the approved messaging doc. The Research Agent should not synthesize trends across weeks - it should report only what it found in the last 7 days. Guardrails prevent agents from filling gaps with hallucinations.

Rule 2: Give the agent a failure mode. "If you cannot find enough content to fill the weekly report, return an empty report with a note explaining why, rather than extrapolating." Agents that know what to do when they don't know tend to fail gracefully instead of silently producing bad output.

Rule 3: Show an example of the output format. If your Research Agent should produce a Markdown report with specific headings, include a blank template in the prompt. Agents follow format examples far more reliably than format descriptions.
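The three rules can be folded into a small prompt builder so that no agent ships without guardrails, a failure mode, and a format example. This is only a sketch: `build_prompt`, its parameters, and the template headings are assumptions for illustration, with the headings derived from the Research Agent's role description.

```python
def build_prompt(role: str, do_not: list[str], failure_mode: str,
                 output_template: str) -> str:
    """Assemble an agent prompt that covers all three rules."""
    lines = [f"Role: {role}", "", "Do NOT:"]
    lines += [f"- {rule}" for rule in do_not]           # Rule 1: guardrails
    lines += ["", "If you cannot complete the task:",
              failure_mode]                             # Rule 2: failure mode
    lines += ["", "Use exactly this output format:",
              output_template]                          # Rule 3: format example
    return "\n".join(lines)


# Blank template the agent fills in; headings are illustrative.
RESEARCH_TEMPLATE = """## Top 5 pain points
## Notable user language
## Emerging problem not on the roadmap"""

prompt = build_prompt(
    role="Report what ICP communities discussed in the last 7 days.",
    do_not=["Synthesize trends across weeks"],
    failure_mode="Return an empty report with a note explaining why, "
                 "rather than extrapolating.",
    output_template=RESEARCH_TEMPLATE,
)
```

Because the function takes all four arguments as required, leaving out a failure mode or a template is an error at definition time rather than a surprise three weeks later.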

The Weekly Review Cycle

Running an AI agent team without a review cycle is how you end up three months in with agents that have drifted from their original intent, producing outputs nobody reads.

Weekly review takes 30 minutes. The questions:

- Did each agent produce its expected output this week?

- Was the output actually used? If not, why?

- What was the most wrong thing an agent produced?

- Does any agent's prompt need updating based on what failed?

One pass through these questions each week catches most drift before it compounds. If an agent's output went unused three weeks in a row, that agent needs either a role redefinition or deletion.
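The three-weeks-unused rule is easy to track if you log one yes/no answer per agent per week. A minimal sketch, assuming a hypothetical `usage_log` that maps each agent to a list of booleans (oldest week first, `True` meaning the output was actually used):

```python
def agents_to_rework(usage_log: dict[str, list[bool]],
                     streak: int = 3) -> list[str]:
    """Return agents whose output went unused for the last `streak` weeks."""
    flagged = []
    for agent, used_by_week in usage_log.items():
        recent = used_by_week[-streak:]
        # Only flag agents with a full streak of history, all of it unused.
        if len(recent) == streak and not any(recent):
            flagged.append(agent)
    return flagged


usage_log = {
    "research": [True, True, False],
    "content": [False, False, False],
    "code_review": [False, False],  # too new to judge
}
flagged = agents_to_rework(usage_log)
```

Here only the content agent is flagged: the research agent was used recently, and the code review agent does not yet have three weeks of history.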

When to Fire an Agent

Not every agent earns its place. Fire an agent when:

The output requires more correction than it would take to do the task manually. If you're spending 45 minutes editing the Content Agent's 5 posts, you're better off writing them yourself.

The agent produces outputs that contradict another agent. Your Research Agent says users want feature X. Your Customer Feedback Agent never mentions feature X. One of them has bad context or a bad prompt - figure out which and fix it, or consolidate the roles.

The task only needs to happen occasionally, not on a schedule. Don't run a weekly Competitor Research Agent if you only need competitor analysis twice a year. Run it as a one-shot when you need it.

The model's hallucination rate on this task is too high. Some tasks are not well-suited to current AI capabilities. Code review works. Predicting user behavior doesn't. If you're spending more time verifying the output than using it, it's the wrong task for an agent.
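These criteria can be written down as a checklist you run during the weekly review. The sketch below is one possible encoding, not a fixed standard: the `AgentWeek` fields and the minute values are assumptions, and the numbers come from your own estimates (the 40-minute manual time is invented for the example).

```python
from dataclasses import dataclass


@dataclass
class AgentWeek:
    correction_minutes: float      # time spent fixing the agent's output
    manual_minutes: float          # time the task would take by hand
    verify_minutes: float          # time spent checking output for errors
    use_minutes: float             # time spent actually using the output
    contradicts_other_agent: bool
    needed_on_schedule: bool


def reasons_to_fire(week: AgentWeek) -> list[str]:
    """Return every firing criterion this agent currently meets."""
    reasons = []
    if week.correction_minutes > week.manual_minutes:
        reasons.append("correction costs more than doing it manually")
    if week.contradicts_other_agent:
        reasons.append("contradicts another agent: fix context or consolidate")
    if not week.needed_on_schedule:
        reasons.append("occasional task: run as a one-shot instead")
    if week.verify_minutes > week.use_minutes:
        reasons.append("verification outweighs use")
    return reasons


# 45 minutes of editing against an assumed 40 minutes to write by hand.
content_agent = AgentWeek(correction_minutes=45, manual_minutes=40,
                          verify_minutes=10, use_minutes=20,
                          contradicts_other_agent=False,
                          needed_on_schedule=True)
```

An empty list means the agent keeps its place this week; anything else is a prompt to fix, consolidate, or cut.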

The Luka Connection: Direction Before Delegation

Here's the thing about AI agent teams that nobody says clearly enough: they multiply your focus, good or bad. If you're focused on the right problem, AI agents accelerate your progress significantly. If you're focused on the wrong problem, AI agents automate the wrong thing faster.

A Content Agent writing five posts a week about your product's features is useless if the thing actually blocking your growth is activation - users signing up but never reaching the moment where the product clicks. Your agents are executing perfectly. You're just pointed at the wrong stage of the funnel.

Luka sits above all the execution tools. It connects to your product's real data (Google Analytics, Sentry, App Store Connect, social signals), correlates signals across sources, and tells you which growth lever actually needs your attention today, matched to where your product is right now. Not which lever is interesting. Which one is the actual bottleneck.

You check Luka in the morning. You know what to work on. Then you close it and tell your agents what to execute. That's the whole loop - Luka sets the direction, your agents execute it, you review results and repeat.

Automation without direction is automating the wrong thing faster. Luka is the direction layer that makes your agent team actually productive.

Apply This Today

If you want to start running an agent team this week, here's the minimum viable version:

Day 1: Define your single highest-value task that repeats every week. For most solo SaaS founders, that's either customer feedback synthesis or content creation. Pick one.

Day 2: Write the agent definition: what it does, what context it has, what it produces, failure mode instructions, output format example.

Day 3: Run the agent. Review the output. Identify the single biggest failure in the output.

Day 4: Fix the prompt based on that failure. Run again.

Day 5: If the output is better than what you'd produce in the same time, keep the agent. If not, either the task or the prompt needs more work.

Don't build four agents before you have one working well. The discipline of getting one right is what makes the next three successful.

Frequently Asked Questions

How many AI agents should a solo founder run?

Start with one. Add a second when the first is reliably producing output you actually use. Most effective solo founder agent teams run 3 to 6 specialized agents. Beyond that, the management overhead starts competing with the productivity gains.

What's the best model for running agent teams?

It depends on the task. Claude performs well on tasks requiring nuanced reasoning - content, research synthesis, code review. GPT-4o is fast and cost-effective for high-volume structured tasks. Use the most capable model for your most critical agent, and cheaper models for routine executions.

How do I prevent agents from contradicting each other?

Define clear boundaries between agent roles and build in explicit handoff points. If two agents have overlapping domains, merge them into one agent with a broader scope. Contradiction is usually a sign of overlapping responsibilities, not a model problem.

Do I need specialized tools to run an AI agent team?

No. You can run effective agent workflows with a combination of Claude, a simple automation tool like n8n or Zapier, and a document store for shared context. Specialized multi-agent frameworks like LangChain or CrewAI add capability but also add complexity - start simple.

How long does it take to set up a working agent team?

A single well-defined agent can be set up and tested in a few hours. Getting it reliable enough to run without daily supervision usually takes one to two weeks of iteration on the prompt and context. A team of three agents is a month of setup time if you're doing it properly.
