How to Manage AI Agents Like a Team

TL;DR: Most solo founders run AI agents chaotically. The answer is treating agents like employees: define roles, set performance standards, run reviews, and fire underperformers.

I have spent the last three months running a team of fifteen AI agents that handle everything from research to content to code review to distribution. Managing them as a system is what separates builders who get compounding leverage from agents from those who do not.

The Problem With Running Agents Like Tools

The way most people use AI agents is broken. They treat an agent as a tool: you write a prompt, the agent does a thing, you get a result. Repeat forever.

Agents need job descriptions, not just prompts. Agents need performance reviews. Agents need to be fired.

The Three-Phase Agent Management Cycle

Phase 1: Hiring

Before you bring an agent onto a task, you need to know what you are hiring for.

What is the agent's domain scope? An agent writing blog posts has a different scope than an agent writing cold emails.

What is the input format? What does the agent receive as context?

What is the output contract? What does success look like?

What is the escalation path? When the agent encounters something outside its scope, where does that work go?
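The four hiring questions can be written down as a small record, so a blank answer is caught before the agent starts work. This is a minimal sketch: the `AgentRole` class, its field names, and the example cold-email role are all illustrative assumptions, not a prescribed format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRole:
    """A job description for one agent (all names here are hypothetical)."""
    name: str
    domain_scope: str     # what the agent is hired to do, and nothing else
    input_format: str     # what the agent receives as context
    output_contract: str  # what success looks like
    escalation_path: str  # where out-of-scope work goes

    def missing_fields(self) -> list[str]:
        """Return the names of any fields that were left blank."""
        return [key for key, value in vars(self).items() if not value.strip()]


# Example role with made-up values; the escalation path is left blank
# on purpose to show the check.
cold_email_writer = AgentRole(
    name="cold-email-writer",
    domain_scope="Write first-touch cold emails; no blog posts, no follow-ups",
    input_format="Prospect name, company, and one recent trigger event",
    output_contract="One email under 120 words with a single call to action",
    escalation_path="",
)

print(cold_email_writer.missing_fields())  # ['escalation_path']
```

If `missing_fields()` returns anything, the role is not ready to hire for.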

Phase 2: Leveling

- Tier 1, New Agent: full human review of every output.

- Tier 2, Provisional: spot checks on 1 in 3 outputs.

- Tier 3, Trusted: exception-based review only.

- Tier 4, Core Team: fully autonomous, with a quarterly audit.
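The tier rules above can be sketched as a single routing function that decides whether a human looks at a given output. Several things here are assumptions: the `Tier` enum and `needs_human_review` names, the `flagged` signal standing in for an "exception", and the choice to review every third output deterministically rather than sampling at random.

```python
from enum import IntEnum


class Tier(IntEnum):
    NEW = 1
    PROVISIONAL = 2
    TRUSTED = 3
    CORE = 4


def needs_human_review(tier: Tier, output_index: int, flagged: bool = False) -> bool:
    """Decide whether a human should review this output.

    output_index counts the agent's outputs from 0. `flagged` is a
    hypothetical exception signal (an escalation, a failed check).
    """
    if tier == Tier.NEW:
        return True  # full review of every output
    if tier == Tier.PROVISIONAL:
        # Spot check 1 in 3 outputs; also review anything flagged (assumption).
        return flagged or output_index % 3 == 0
    if tier == Tier.TRUSTED:
        return flagged  # exception-based review only
    # Core Team: fully autonomous. The quarterly audit happens outside this function.
    return False


spot_checks = [needs_human_review(Tier.PROVISIONAL, i) for i in range(6)]
print(spot_checks)  # [True, False, False, True, False, False]
```

Promoting or demoting an agent then means changing one value, not rewriting the review process.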

Phase 3: Performance Reviews

Review each agent against five metrics: task completion rate, escalation quality, output consistency, context recall, and domain boundary compliance.
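One way to make a review repeatable is to score the five metrics and let the weakest one drive the decision. This is only a sketch: scoring each metric as a fraction from 0.0 to 1.0, the `Review` and `decide` names, and the 0.9 and 0.6 thresholds are all assumptions chosen for illustration, and the example scores are made up.

```python
from dataclasses import dataclass


@dataclass
class Review:
    # Each score is a fraction from 0.0 to 1.0; how you measure it is up to you.
    task_completion_rate: float
    escalation_quality: float
    output_consistency: float
    context_recall: float
    domain_boundary_compliance: float

    def weakest(self) -> tuple[str, float]:
        """Return the lowest-scoring metric and its score."""
        return min(vars(self).items(), key=lambda item: item[1])


def decide(review: Review, promote_at: float = 0.9, demote_below: float = 0.6) -> str:
    """Promote only if every metric clears the bar; demote if any one falls below the floor."""
    _, low = review.weakest()
    if low >= promote_at:
        return "promote"
    if low < demote_below:
        return "demote"
    return "hold"


# Illustrative scores only: strong everywhere except staying in its lane.
review = Review(0.95, 0.92, 0.97, 0.91, 0.55)
print(review.weakest())  # ('domain_boundary_compliance', 0.55)
print(decide(review))    # demote
```

Gating on the weakest metric rather than the average keeps one strong score from hiding a boundary violation.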

The Agent You Should Fire First

The general-purpose research agent. Fire the generalist. Hire two specialists.

Common Mistakes

- Prompt stacking instead of role definition

- Rewarding outputs instead of behaviors

- Never demoting or firing agents

- No escalation path defined

- Context window hoarding

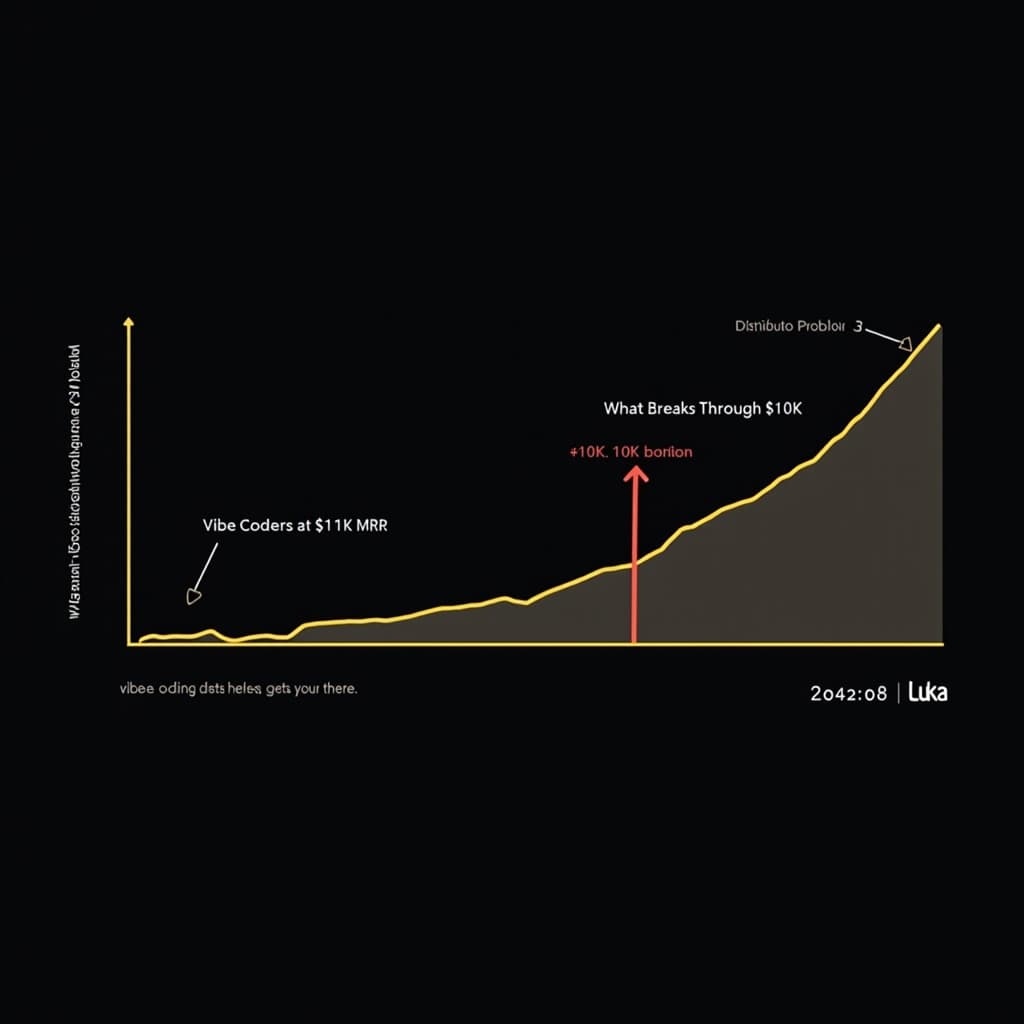

The Compounding Effect

A Tier 4 agent that has been running for four months produces better output than a Tier 1 agent on day one, because the context patterns, output contracts, and quality standards are deeply embedded.

Start with one agent. Run the audit. Define the role. Set the standards. Get it to Tier 2. Then do the next one.